
- SSLMs have a low memory cost and don’t require additional memory to generate arbitrarily long blocks of text

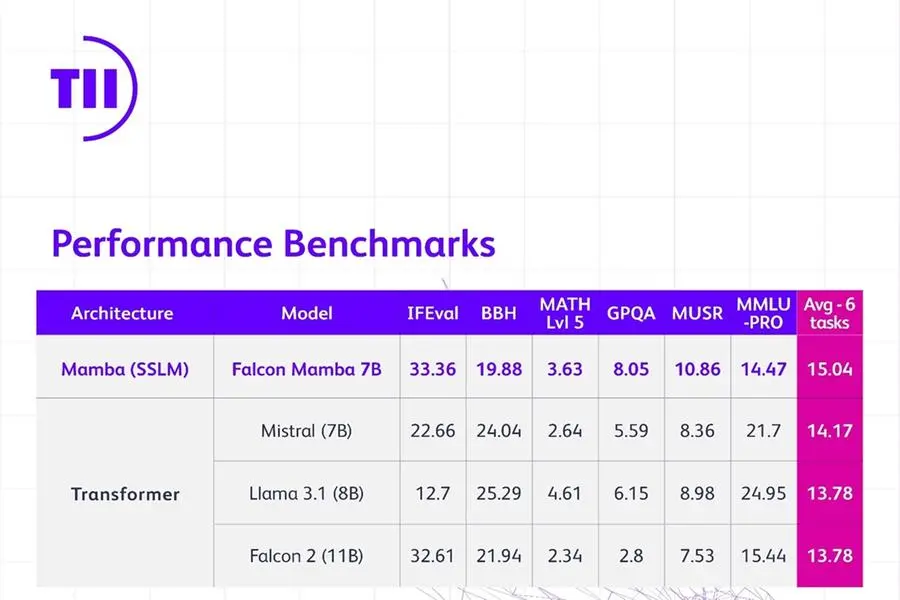

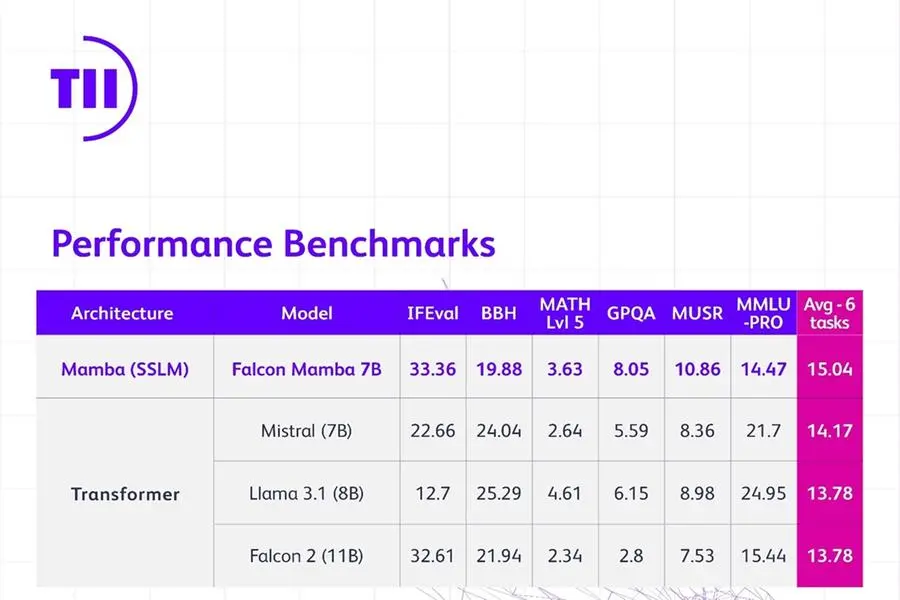

- Falcon Mamba 7B also outperforms traditional transformer architecture models such as Meta’s Llama 3.1 8B and Mistral’s 7B

- New model reflects the innovation and pioneering approach of Abu Dhabi in AI research and development

Abu Dhabi, United Arab Emirates: The Technology Innovation Institute (TII), a leading global scientific research center and the applied research pillar of Abu Dhabi’s Advanced Technology Research Council (ATRC), has released a new large language model in its Falcon series, the Falcon Mamba 7B. The new model is the top-performing open source State Space Language Model (SSLM) in the world, as independently verified by Hugging Face.

As the first SSLM for Falcon, it departs from prior Falcon models, which all use a transformer-based architecture. This new Falcon Mamba 7B model is yet another example of the pioneering research the institute is conducting and the breakthrough tools and products it makes available to the community in an open source format.

H.E. Faisal Al Bannai, Secretary General of ATRC and Adviser to the UAE President for Strategic Research and Advanced Technology Affairs, said: “The Falcon Mamba 7B marks TII’s fourth consecutive top-ranked AI model, reinforcing Abu Dhabi as a global hub for AI research and development. This achievement highlights the UAE’s unwavering commitment to innovation.”

Compared with transformer architecture models, Falcon Mamba 7B outperforms Meta’s Llama 3.1 8B, Llama 3 8B, and Mistral’s 7B on the newly introduced benchmarks from Hugging Face. Among SSLMs, Falcon Mamba 7B beats all other open source models on the old benchmarks, and it will be the first model on Hugging Face’s new, tougher benchmark leaderboard.

Dr. Najwa Aaraj, Chief Executive of TII, said: “The Technology Innovation Institute continues to push the boundaries of technology with its Falcon series of AI models. The Falcon Mamba 7B represents true pioneering work and paves the way for future AI innovations that will enhance human capabilities and improve lives.”

State space models perform very well at understanding complex situations that evolve over time, such as the content of a whole book. This is because SSLMs do not require additional memory to digest such large amounts of information.

Transformer-based models, on the other hand, are very efficient at remembering and using information they have processed earlier in a sequence. This makes them very good at tasks like content generation. However, because they compare every word with every other word, they require significant computational power.
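The contrast between the two architectures can be illustrated with a toy sketch. It is not the Falcon Mamba 7B implementation: the scalar recurrence, the function names, and the parameters `a`, `b`, and `c` are all assumptions chosen for illustration. It shows a state space update carrying a single fixed-size state from one token to the next, while the number of word-to-word comparisons in full attention grows with the square of the sequence length.

```python
# Toy illustration only; names and parameters are hypothetical,
# and this is not how Falcon Mamba 7B is implemented.

def ssm_stream(xs, a=0.9, b=0.1, c=1.0):
    """Stream outputs of a scalar linear state space recurrence.

    h_t = a * h_{t-1} + b * x_t
    y_t = c * h_t

    Only the single value h is carried between steps, so the memory
    needed for the state does not grow with the length of xs.
    """
    h = 0.0
    for x in xs:
        h = a * h + b * x
        yield c * h


def attention_comparisons(n_tokens):
    """Comparisons made when every token is compared with every token."""
    return n_tokens * n_tokens


if __name__ == "__main__":
    for y in ssm_stream([1.0, 1.0, 1.0]):
        print(y)
    for n in (1_000, 10_000):
        print(n, "tokens:", attention_comparisons(n), "comparisons")
```

In this sketch, a tenfold increase in sequence length multiplies the comparison count by one hundred, while the recurrence still holds only one number of state.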

SSLMs can find applications in various fields such as estimation, forecasting, and control tasks. Similar to the transformer architecture models, they also excel in Natural Language Processing tasks and can be applied to machine translation, text summarization, computer vision, and audio processing.

Dr. Hakim Hacid, Acting Chief Researcher of TII’s AI Cross-Center Unit, said: “As we introduce the Falcon Mamba 7B, I’m proud of the collaborative ecosystem of TII that nurtured its development. This release represents a significant stride forward, inspiring fresh perspectives and further fueling the quest for intelligent systems. At TII, we’re pushing the boundaries of both SSLM and transformer models to spark further innovation in generative AI.”

Falcon LLMs have been downloaded over 45 million times, a sign of the models’ strong adoption. Falcon Mamba 7B will be released under TII Falcon License 2.0, a permissive Apache 2.0-based software license that includes an acceptable use policy promoting the responsible use of AI. More information on the new model can be found at FalconLLM.TII.ae.

*Source: AETOSWire

Contacts:

Jennifer Dewan, Senior Director of Communications

Jennifer.dewan@tii.ae